neural networks

This is an artificial intelligence powered upscale and remastering of the 1985 Thundercats cartoon intro (previously: this absolutely stunning CGI recreation of the intro). Like whenever I wear a suit, it looks GOOD. “But you never wear a suit.” A bathing suit. “Oh my.” Dare me to jump off the

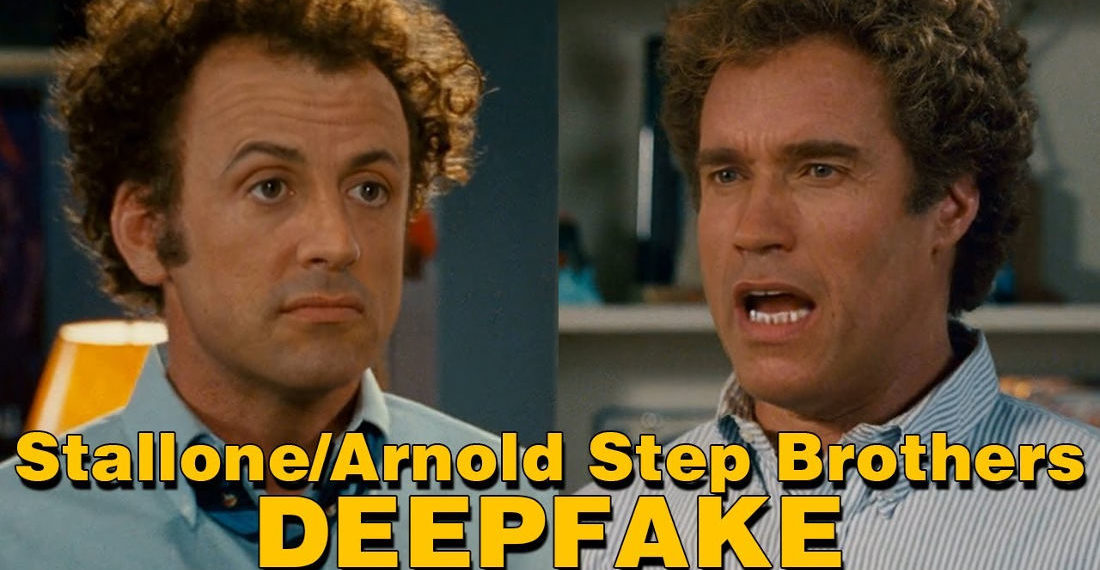

In “I’m honestly surprised it took this long” news, this is a video of Sylvester Stallone and Arnold Schwarzenegger as Dale Doback (John C. Reilly) and Brennan Huff (Will Ferrell) in Step Brothers, as deepfaked by Brian Monarch. It works unsurprisingly well, presumably because Stallone and Schwarzenegger actually are stepbrothers

This is Bataille de boules de neige (Snowball Fight) by Louis Lumière, a short silent film shot in Lyon, France in 1896 that’s been upscaled and colorized using DeOldify, an open-source AI tool that does just that. The result is impressive. Also, I really liked the plot: everybody nail the

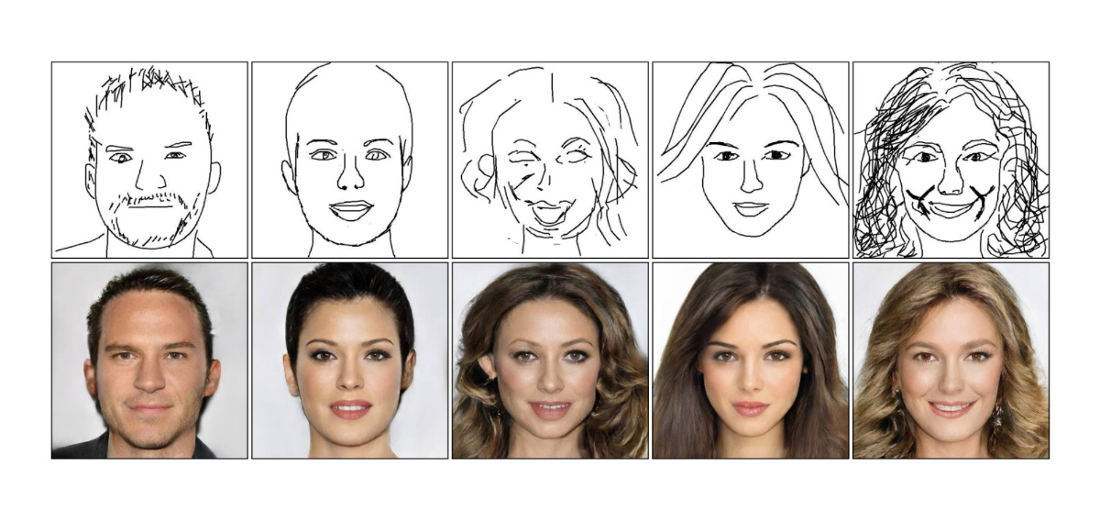

This is a video demonstration of DeepFaceDrawing, an artificial intelligence system that creates photorealistic portraits from really crappy drawings, and *eyeing example photo* I mean REALLY crappy. So, the next time you need to generate yourself a fake online boyfriend or girlfriend (without risking stealing someone’s Instagram photos) so your

Because the end can’t come soon enough, this is a video of Gollum deepfaked to perform Scatman John’s ‘The Scatman’. It’s pretty terrifying just how good it is. Thankfully *mixing Kool-Aid, tastes with pinky* it will all be over soon. “Add more of the skull and crossbones powder.” More pirate

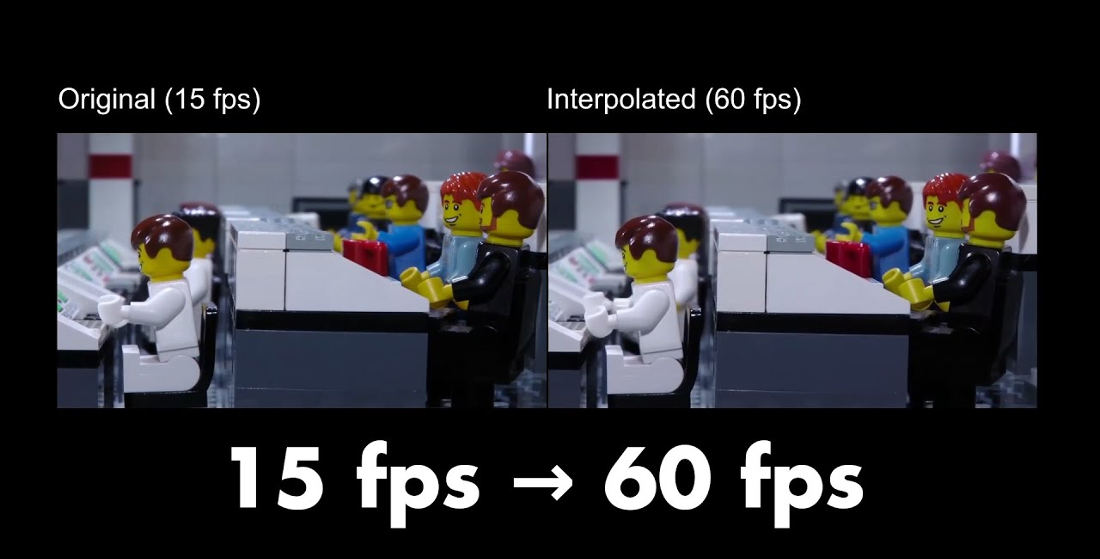

This is a video of YouTuber LegoEddy discussing and exhibiting the Apollo 11 stop-motion LEGO movie he made that was originally shot at 15 frames per second, which he then boosted to 60FPS using DAINAPP (Depth-Aware video frame INterpolation), a neural network that predicts new frames to increase the

Because this is the internet, here’s a video of a bunch of different movie characters deepfaked to perform Smash Mouth’s ‘All Star’. The video was created by YouTuber ontyj utilizing Wav2Lip, a neural network that uses existing video of human faces speaking to match a different audio source. The result

This is a video of Rasul Hasan providing a tutorial for how to use the AnimeGAN2 neural network to render real-life photos into anime-like scenes. In this case, Rasul trained the neural network with data sets from the movies of directors Hayao Miyazaki, Makoto Shinkai, and Satoshi Kon. Like working

